RTO and RPO are two terms that must sound familiar for database professionals, but not everybody know that a good database design can dramatically improve our recovery time in case of disaster Usually it’s said the most important duty of DBA’s is to be able to guarantee the integrity of the data of the business we work for.

I subscribe those words too, and probably the vast majority of the people I know that do this for a living. That’s why sometimes we can be a bit too rough when speaking about our servers or databases, but that is a different story.

At the same time we tend to think if we do backups we are safe and that will be true as long as we verify that they actually work, but is that enough? How long would it take to get you back in business if something fatal happens to your biggest database, let’s say database corruption?

There is fancy and expensive gear to keep you up and running for a lot of money and man power to make it work smoothly, but not every shop can afford fail over clusters and AG replicas seasoned with SQL Server Expensive edition, SAN storage or flash arrays.

Some people have a much more humble hardware and their data is as important as any other, and their businesses will be affected if databases are down, so what can they do? Exactly, having a good database design along with a good restore strategy.

Files and Filegroups

Databases in SQL Server can be logically divided into filegroups which contain physical files where our data lives. And it’s important knowing all the benefits that can bring us a bit of planning things ahead, before it’s too late.

By default and at least, every database have a filegroup called PRIMARY where all the database’s metadata lives and all the user data will be unless we specify otherwise.

That is an important point because, as I will show you in a minute, if we have a good logical and physical design, we can get back in business earlier than you think.

According to Books Online:

There are two types of filegroups:

Primary

The primary filegroup contains the primary data file and any other files not specifically assigned to another filegroup. All pages for the system tables are allocated in the primary filegroup.User-defined

User-defined filegroups are any filegroups that are specified by using the FILEGROUP keyword in a CREATE DATABASE or ALTER DATABASE statement.

So if we don’t create additional filegroup[s] in our databases not only all the data will go to the same logical container but also in case we have to restore that database from a backup, we will have to wait until all of it it’s restored.

Imagine you have a lot of historical data for instance and there is a disaster, if you had different filegroups, one for current data and another for the historical, you would be able to get your live data first and quickly (to get you up and running), and then restore all the historical which is not critical.

To show you how, I’m going to create a database with different filegroups so you’ll see how we can do.

USE master

GO

IF DB_ID('FileGroupTest') IS NOT NULL BEGIN

ALTER DATABASE FileGroupTest SET SINGLE_USER WITH ROLLBACK AFTER 10

DROP DATABASE FileGroupTest

END

GO

CREATE DATABASE FileGroupTest

CONTAINMENT = NONE

ON PRIMARY

( NAME = N'FileGroupTest_primary', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_primary.mdf', SIZE = 16MB , MAXSIZE = UNLIMITED, FILEGROWTH = 16MB ),

FILEGROUP USER_DATA

( NAME = N'FileGroupTest_USER_DATA_01', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_USER_DATA_01.ndf' , SIZE = 16MB , MAXSIZE = UNLIMITED, FILEGROWTH = 16MB ),

( NAME = N'FileGroupTest_USER_DATA_02', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_USER_DATA_02.ndf' , SIZE = 16MB , MAXSIZE = UNLIMITED, FILEGROWTH = 16MB ),

FILEGROUP MORE_USER_DATA

( NAME = N'FileGroupTest_MORE_USER_DATA_01', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_MORE_USER_DATA_01.ndf' , SIZE = 16MB , MAXSIZE = UNLIMITED, FILEGROWTH = 16MB ),

( NAME = N'FileGroupTest_MORE_USER_DATA_02', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_MORE_USER_DATA_02.ndf' , SIZE = 16MB , MAXSIZE = UNLIMITED, FILEGROWTH = 16MB )

LOG ON

( NAME = N'patbasexmlstorage_RAG_heaps_log', FILENAME = N'C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQL2016\MSSQL\DATA\FileGroupTest_log.ldf' , SIZE = 16MB , MAXSIZE = 2048GB , FILEGROWTH = 1048576KB )

GO

ALTER DATABASE FileGroupTest MODIFY FILEGROUP USER_DATA DEFAULT

GO

See how I specified the filegroup [USER_DATA] to be the new default so even if someone forgets, new tables won’t go to [PRIMARY].

Now let’s create some tables, one of each filegroup.

USE FileGroupTest GO CREATE TABLE dbo.t_user_data( Id INT NOT NULL IDENTITY PRIMARY KEY , col1 CHAR(20) NOT NULL DEFAULT 'A' ) ON USER_DATA CREATE TABLE dbo.t_more_user_data( Id INT NOT NULL PRIMARY KEY , col1 CHAR(20) NOT NULL DEFAULT 'A' , col2 CHAR(20) NOT NULL DEFAULT 'B' , col3 CHAR(20) NOT NULL DEFAULT 'C' , col4 CHAR(20) NOT NULL DEFAULT 'D' , col5 CHAR(20) NOT NULL DEFAULT 'E' , col6 CHAR(20) NOT NULL DEFAULT 'F' ) ON MORE_USER_DATA GO INSERT INTO dbo.t_user_data DEFAULT VALUES INSERT INTO dbo.t_more_user_data (Id) VALUES (SCOPE_IDENTITY()) GO 1000 SELECT * FROM dbo.t_user_data SELECT * FROM dbo.t_more_user_data GO BACKUP DATABASE FileGroupTest TO DISK = 'FileGroupTest_01.bak' WITH INIT GO

Now once we have some data and made a full backup it’s time to perform what it’s called piecemeal restore, where one by one we will be putting the different filegroups online, having access to the data that contain.

USE master GO RESTORE DATABASE FileGroupTest FILEGROUP = 'PRIMARY' FROM DISK = 'FileGroupTest_01.bak' WITH PARTIAL, REPLACE, NORECOVERY GO

The key here that we specify PARTIAL which allow us to restore later the rest of the filegroups that belong to this database. Take in mind that if we left [PRIMARY] to store just the database’s metadata, it’ll be quite small and will restore very quickly.

When it’s done the database will be online and users will be able to connect to it. Let’s check.

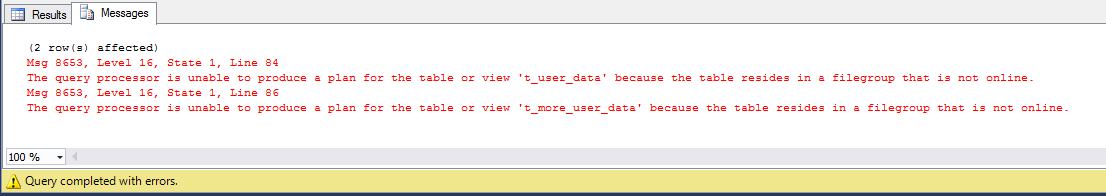

USE FileGroupTest GO SELECT * FROM sys.tables GO SELECT TOP 1 * FROM dbo.t_user_data GO SELECT TOP 1 * FROM dbo.t_more_user_data GO

First query will return two rows from sys.tables, but as soon as we want some user data… boom! Now we’re going to restore mission critical data in [USER_DATA]

USE master GO RESTORE DATABASE FileGroupTest FILEGROUP = 'USER_DATA' FROM DISK = 'FileGroupTest_01.bak' GO

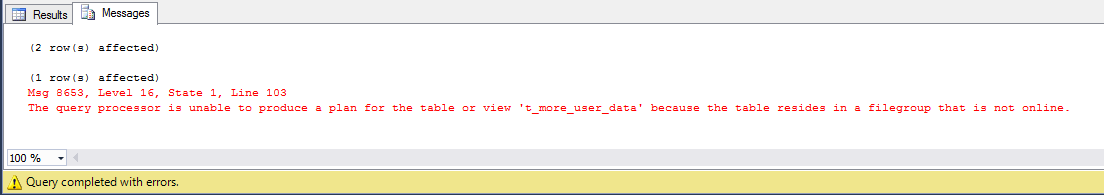

Now our tables within [USER_DATA] filegroup will be accessible.

USE FileGroupTest GO SELECT * FROM sys.tables GO SELECT TOP 1 * FROM dbo.t_user_data GO SELECT TOP 1 * FROM dbo.t_more_user_data GO

Once we have our users working again, we can restore this less critical data.

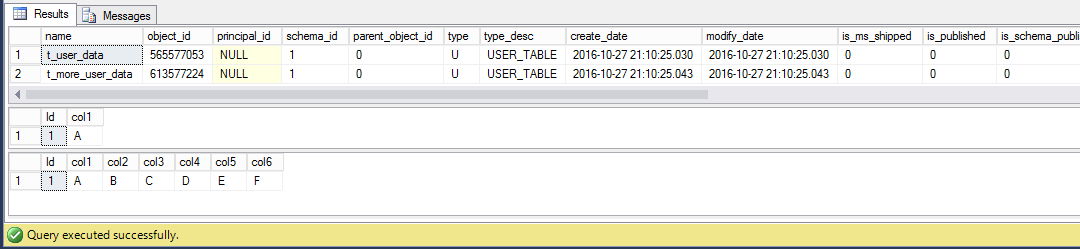

USE master GO RESTORE DATABASE FileGroupTest FILEGROUP = 'MORE_USER_DATA' FROM DISK = 'FileGroupTest_01.bak' GO USE FileGroupTest GO SELECT * FROM sys.tables GO SELECT TOP 1 * FROM dbo.t_user_data GO SELECT TOP 1 * FROM dbo.t_more_user_data GO

And finally we have all our data back, step by step, filegroup by filegroup, allowing users to access the most critical data first and then that less critical or historical.

Conclusion

When it’s time to get back after being hit by a disaster, we can choose between solutions which are cool and expensive, or if we are a bit tight, we can follow some best practices and be a bit creative to minimize our recovery time.

The technique explained above can really improve our recovery time objective (RTO) to match our Service Level Agreement (SLA), which means happy bosses and paychecks coming at the end of the month 🙂

As always just to wrap up, some useful links

– Database files and filegroups (Books online)

– RESTORE (TSQL)

– Piecemeal Restore (TSQL)

Thanks for reading

Very nice and simple explanation of a great feature that gets overlooked far too often. I would add that when there is archived tables in a db, put them on their own filegroup. They can be restored last.

Finally is piecemeal restore not an Enterprise feature?

Thanks for your comment! It is enterprise only I’m afraid, please see https://msdn.microsoft.com/en-us/library/ms177425.aspx for complete reference.

Cheers!