In this post I want to share my opinion about using UNICODE data types by default for strings stored in SQL Server

This post is part of the T-SQL Tuesday, which is a monthly blog party on the second Tuesday of each month. Everyone is welcome and have the chance to write about SQL Server.

This month’s host is Aaron Bertrand (B|T) and the topic can be chosen between telling something you are passionate about outside the SQL Server world, or to choose amongst his index of bad habits, which one of them really gets under my skin.

Background

As the title of the post announces, there is an ongoing debate about the use of UNICODE, and like any other debate there are two different strong positions, for and against the use.

I will try not to get so emotional and just give some examples why it in a BAD idea to indiscriminately use UNICODE data types just in case.

Example 1, ISO codes

Obviously everyone understands that these fall into the bag of you are 100% sure it will never require UNICODE.

We can have here currencies, countries, languages and so on, usually are not very long just a couple or three characters long, depending on which code we use.

But as always we need to think big, you create the countries table with ~200 rows, so what is the big deal? Well, if you decide to make the country code the primary key, then you have your problem right there.

Every table where you have a foreign key to the countries table, for example [dbo].[PhoneListing] which has 100M rows inherits that column, now you see trouble, don’t you?. In rough figures 2 bytes * 100M = ~190MB of extra space wasted in just one table.

But not only the waste is in storage, which people might think it is cheap, it’s in your buffer pool every time you read one of those pages into memory, it’s in your transaction log every time you update it, even on your network if it happens you have AG’s or mirroring!!

Example 2, Dates

Yes, you have read it… I see dates stored as NVARCHAR(10) and NCHAR(10) on daily basis, please don’t ask me why.

This case is even worse, because DATE takes 3 bytes where NCHAR(10) takes 20 bytes, yes Ladies and Gentlemen more than 6 times more space to store the same data.

But wait! how can you be certain that those ten characters are actually a valid date? You can’t, unless you reinvent the wheel and validate that those dates are obviously valid dates and pay the performance penalty of doing it.

Using the previous example, this one gets even better when you multiply by eighteen, 18 bytes * 100M = ~1.6GB Wow now we’re talking.

SQL Server 2016 SP1 to the rescue

But not everything is lost, because if you take ownership of a system that is implemented in such way and there is no chance to change it, you still can live with it and try not to waste so much space.

I said SQL Server 2016 SP1 for a reason, not because what I’m going to tell you about didn’t exists before, but it was in that version when the feature became available to all different editions and that is, compression.

I wrote a blog post some time ago in how I managed to save tons of space by using ROW compression.

As mentioned in the post ROW compression will make your non-unicode characters to go back to just one byte storage. You will pay the penalty in CPU usage of course, but sometimes it is worth it. And now everyone can use it.

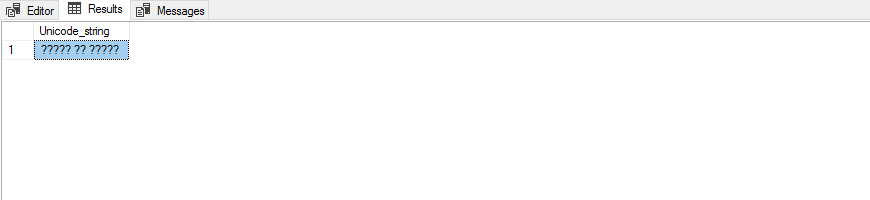

Unicode Literals

This is my favourite. I remember that day a developer was really annoyed because his data was “corrupted” because the fields in his table where full of question marks. 🙂

Something like this

DECLARE @Unicode_string NVARCHAR(50) = 'какво ще бъда?' SELECT @Unicode_string AS Unicode_string

I still laugh at this, because we made him write in a post-it and stick to his monitor the following:

N”

So at the end even if you provisioned everything correctly, you might be facing the classic layer 8 error.

Conclusion

You can see that there are more than reasonable uses for UNICODE, that’s why they exist, but sometimes they are just a no-no.

This has been better than going to therapy, what a relieve!

Just to finish thanking Aaron for hosting this month’s event and you guys for reading

Thanks!

Great article…I really enjoyed reading.

People can always come up with a “storage is cheap nowadays” answer. Whether this is correct or not most do not think about RAM (buffer pool) and if things go worse all these extra space tends to add up soon. Have a look at my contribution at http://www.martinguth.de/database-administration/why-i-chose-int-rather-than-bigint-for-id-columns/ … I also wrote about saving some space regarding ID columns.

Thanks for your comment! I read your post and you’re absolutely right, and to exhaust values for the INT data type will take you 13 years inserting 10 rows per second for instance.

There are systems that probably would do it, but not so many.

Cheers!