This is not the first time I write about best practices or how a good design can help even from a performance point of view, but today I’ll go to an earlier stage in database design, choosing data types This is not the first time I write about best practices or how a good design can help even from a performance point of view, but today I’ll go to an earlier stage in database design, choosing data types.

In general, we want always to use the smallest data type possible in order to minimize storage requirements.

Numeric or date/time data types might be a bit more complicated since there are several and depend on the different ranges and precision, we can find the reference in Books Online.

But if we speak about alphanumeric data, the choices are more limited, we can only choose between fixed or variable length and to allow UNICODE or not. Even though it may sound not a big deal, the consequences can lead us to… downtime.

Set up the playground

As I said choosing the smallest data type is usually a good idea, but we need to know well our data and foresee future requirements.

It’s also a common believe that we only will have problems if we go the way from a big data type to a smaller one. This is not true, we can have problems the other way too due to how SQL Server internally will handle the change.

Let me show you from the beginning so let’s create a table with a VARCHAR column that later we will convert to NVARCHAR

**All these demos are run in SQL Server 2016 Developer Edition.

CREATE DATABASE var_to_nvar GO USE var_to_nvar GO CREATE TABLE dbo.t ( ID INT IDENTITY PRIMARY KEY , var_col VARCHAR(255) ) GO INSERT INTO dbo.t SELECT TOP (100) 'aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa' FROM sys.all_columns AS t1 GO

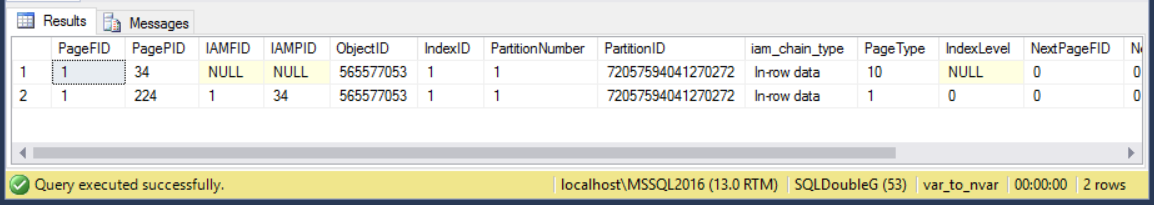

Now that we have our table, we can see some internals like how many pages are allocated to the clustered index.

DBCC IND ('var_to_nvar', 'dbo.t', 1)

-- 1 data page, 224

If we look inside this page, we can see how the data is internally stored. We need to know the database id and activate trace flag 3604 for the current session to see the output of DBCC PAGE()

SELECT DB_ID() -- 10 DBCC TRACEON (3604) DBCC PAGE(10, 1, 224, 3) /* Slot 0 Offset 0x60 Length 53 Record Type = PRIMARY_RECORD Record Attributes = NULL_BITMAP VARIABLE_COLUMNS Record Size = 53 Memory Dump @0x0000000CDDE7A060 0000000000000000: 30000800 01000000 02000001 00350061 61616161 0............5.aaaaa 0000000000000014: 61616161 61616161 61616161 61616161 61616161 aaaaaaaaaaaaaaaaaaaa 0000000000000028: 61616161 61616161 61616161 61 aaaaaaaaaaaaa Slot 0 Column 1 Offset 0x4 Length 4 Length (physical) 4 ID = 1 Slot 0 Column 2 Offset 0xf Length 38 Length (physical) 38 var_col = aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa Slot 0 Offset 0x0 Length 0 Length (physical) 0 */

See how every ‘a’ is represented by the byte ’61’ so we have as many as thirty eight of them.

Changing the data type

If it wasn’t the need of storing UNICODE or you didn’t ask the right questions before creating your table, you can find yourself having to change the data type.

You’ll see soon how this is a problem.

USE var_to_nvar GO BEGIN TRAN ALTER TABLE dbo.t ALTER COLUMN var_col NVARCHAR(255)

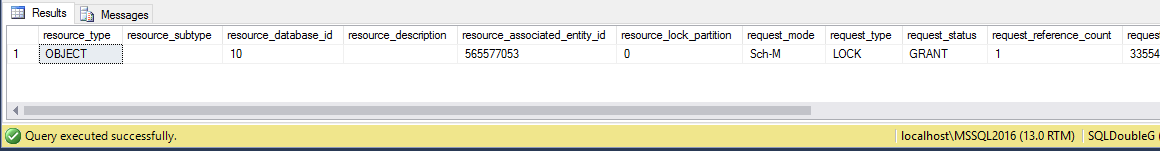

While our transaction is running the table is not accessible by any other connection, so the rest of your users have to wait.

In another window you can run

USE var_to_nvar GO SELECT * FROM sys.dm_tran_locks WHERE resource_associated_entity_id = 565577053

Schema Modification Lock (Sch-M) is not compatible with any other lock, so bad luck if someone wants to get any of that data.

But you can say «hey, you didn’t commit your transaction!» and that is true, only for the purpose of seeing the lock, because to be honest, 100 rows won’t make your life complicated, but if you keep reading I’ll show you all the changes behind the scenes and you can see by yourself how much work for this little number of rows.

Let’s get back and COMMIT our transaction and then check again how our clustered index look like.

COMMIT

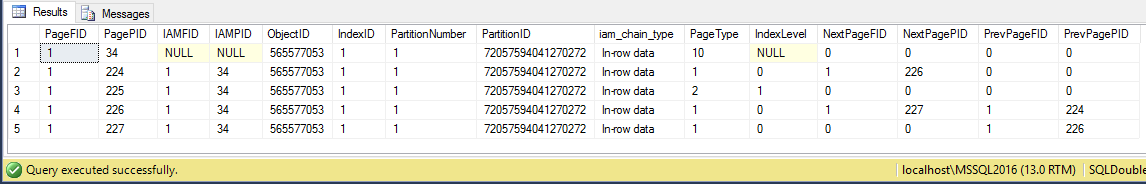

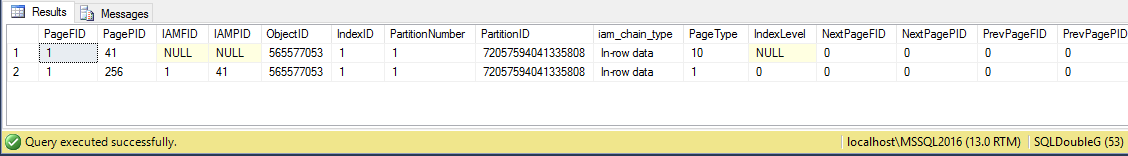

DBCC IND ('var_to_nvar', 'dbo.t', 1)

Now we see there are 2 levels in our index and 3 data pages (page type 1) as opposed to one page we had before, and if we check one of those, we can see how a row looks like after the change.

DBCC TRACEON (3604) DBCC PAGE(10, 1, 224, 3) /* Slot 0 Offset 0xe3f Length 131 Record Type = PRIMARY_RECORD Record Attributes = NULL_BITMAP VARIABLE_COLUMNS Record Size = 131 Memory Dump @0x00000026EE67AE3F 0000000000000000: 30000800 01000000 03000002 00370083 00616161 0............7..aaa 0000000000000014: 61616161 61616161 61616161 61616161 61616161 aaaaaaaaaaaaaaaaaaaa 0000000000000028: 61616161 61616161 61616161 61616161 00610061 aaaaaaaaaaaaaaaa.a.a 000000000000003C: 00610061 00610061 00610061 00610061 00610061 .a.a.a.a.a.a.a.a.a.a 0000000000000050: 00610061 00610061 00610061 00610061 00610061 .a.a.a.a.a.a.a.a.a.a 0000000000000064: 00610061 00610061 00610061 00610061 00610061 .a.a.a.a.a.a.a.a.a.a 0000000000000078: 00610061 00610061 006100 .a.a.a.a.a. Slot 0 Column 1 Offset 0x4 Length 4 Length (physical) 4 ID = 1 Slot 0 Column 67108865 Offset 0x11 Length 0 Length (physical) 38 DROPPED = NULL Slot 0 Column 2 Offset 0x37 Length 76 Length (physical) 76 var_col = aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa */

Here we can see something very important to be taken in mind, after issuing ALTER TABLE and changing the data type, the column with the new data type is added after the old one, which is left behind and only logically removed (see DROPPED = NULL), that makes our storage requirements grow quite a lot (hence now we have 3 data pages)

If we then rebuild the index we can see how the column with the old data type is not populated to the new structure and therefore we only use the minimum space needed.

SQL Server 2016 to the rescue

If you are running SQL Server 2016 (I suspect only Enterprise or Developer Edition) you will have some relieve for this pain, there is an ONLINE option, which does not do what is says on the tin, but somehow helps a lot.

Let’s try it, you can drop the database and get to this point to see it.

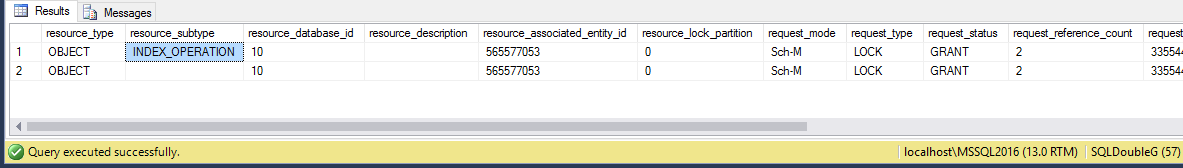

BEGIN TRAN ALTER TABLE dbo.t ALTER COLUMN var_col NVARCHAR(255) WITH (ONLINE = ON)

The operation I said IT IS NOT ONLINE, as the we still have the Schema Modification lock, furthermore, we have two

And this is because this way (ONLINE) it will rebuild the index, so we don’t leave behind all that junk in our data pages.

See how the index is just one page as it used to be (because 100 rows still fit in one page),

COMMIT

DBCC IND ('var_to_nvar', 'dbo.t', 1)

And this page is a new page (see page Id) and looks brand new if we look inside.

DBCC TRACEON (3604) DBCC PAGE(10, 1, 256, 3) /* Slot 0 Offset 0x60 Length 35 Record Type = PRIMARY_RECORD Record Attributes = NULL_BITMAP VARIABLE_COLUMNS Record Size = 35 Memory Dump @0x000000D25C9FA060 0000000000000000: 30000800 01000000 02000001 00230061 00610061 0............#.a.a.a 0000000000000014: 00610061 00610061 00610061 006100 .a.a.a.a.a.a.a. Slot 0 Column 1 Offset 0x4 Length 4 Length (physical) 4 ID = 1 Slot 0 Column 2 Offset 0xf Length 20 Length (physical) 20 var_col = aaaaaaaaaa Slot 0 Offset 0x0 Length 0 Length (physical) 0 KeyHashValue = (8194443284a0) */

Completely clean.

Workaround

If you want to avoid some of these problems my recommendation would be to create a new table, dump the data, rename both old and new one and then remove the old one, which is basically what you get with the 2016 online hint, but better since you won’t be locking the table for longer than the milliseconds to change both names.

Conclusion

We have seen there are some problems when data exists in our table and we want to change a column’s data type (the more rows the bigger problem we might have). These are:

- Concurrency, ALTER TABLE will hold a Schema Modification lock until completes, so if we have a lot of data we will lock the whole table until the operation is completed, not allowing any access.

- Transaction Log Generation, there is a fantastic article by Paul Randal where is explained how expensive page splits are and this operation since we are increasing the length of every and each row in the table (if has data for that column) will result in page splits.

- Network, If you have AG or another HA/DR solution, all that generated transaction log will need to be moved across the network.

- Storage, this point might be the least critical as our databases usually are in constant growth, but good if you take it in mind.

- Fragmentation, on top of the above, once we have finished, our clustered index will be heavily fragmented, so an INDEX REBUILD would be necessary, adding more CPU, concurrency and storage requirements to clean up all that mess.

As always thanks for reading and feel free to ask any question you may have.

1 comentario sobre «Database design Matters, choosing the right data type»